Explainable AI (XAI) is transforming compliance by making AI decisions transparent, traceable, and justifiable. Traditional AI often acts as a "black box", leaving compliance teams and regulators in the dark about how decisions - like flagging transactions or denying credit - are made. XAI solves this by explaining the "why" behind decisions, ensuring compliance with regulations like the EU AI Act, GDPR, and U.S. AML laws.

Key Takeaways:

- Regulatory Compliance: XAI aligns with laws requiring transparency, such as the "right to explanation" under GDPR.

- Fraud Detection: Models paired with XAI techniques have reached 99% accuracy on real-world transaction data, and the explanations help reduce false positives and investigation time.

- Auditability: XAI creates tamper-evident audit trails, documenting every AI-driven decision for regulatory reviews.

- Use Cases: It enhances anti-money laundering (AML) efforts, Know Your Customer (KYC) processes, and credit decision-making.

While XAI boosts trust and efficiency, challenges like balancing accuracy with interpretability and managing costs remain. Startups and financial institutions can begin by applying XAI to high-control processes and integrating it with human oversight for better results.

Why Explainable AI Matters for Regulatory Compliance

U.S. financial institutions operate in a regulatory environment that demands more than just accurate AI predictions - it requires clear evidence of how those predictions are made. Federal regulators may embrace innovation, but they won’t hesitate to penalize insufficient documentation. If an AI system lacks detailed reasoning and proper approvals, institutions risk facing regulatory actions.

The statistics are telling. A staggering 82% of financial institutions report regulatory challenges when using traditional AI models, and 73% highlight the need for better explainability in their AI systems. This isn’t just a technical hurdle - it’s a compliance risk that could result in fines, enforcement actions, or operational restrictions.

Meeting Regulatory Standards

Explainable AI (XAI) plays a key role in meeting the documentation and validation requirements set by U.S. financial regulators. For example, the Federal Reserve's SR 11-7 and the OCC's Bulletin 2011-12, the agencies' model risk management guidance, call for an "effective challenge" process and thorough documentation for models that influence decisions, which includes AI and machine learning models. XAI provides the tools to meet these demands, offering the detailed documentation and outcome analysis that regulators require.

For fair lending compliance under the Equal Credit Opportunity Act (ECOA), XAI techniques like SHAP and LIME can pinpoint the exact factors behind a credit denial. This allows lenders to issue the legally required adverse action notices and identify potential algorithmic bias before it escalates into a regulatory problem. Similarly, the SEC and FINRA require firms to oversee AI-generated communications and predictive analytics under market conduct rules.
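To make the idea of pinpointing denial factors concrete, here is a minimal sketch that computes exact Shapley values (the quantity SHAP approximates) by enumerating feature coalitions. It is not the `shap` library, and the scoring function, feature names, and baseline applicant are all hypothetical stand-ins for a trained credit model. Exact enumeration is only practical for a handful of features.

```python
from itertools import combinations
from math import factorial


def shapley_values(predict, instance, baseline):
    """Exact Shapley values; features outside a coalition take baseline values."""
    names = list(instance)
    n = len(names)
    values = {}
    for name in names:
        others = [f for f in names if f != name]
        total = 0.0
        for size in range(n):
            # Standard Shapley weight for a coalition of this size.
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for coalition in combinations(others, size):
                without = {
                    f: (instance[f] if f in coalition else baseline[f])
                    for f in names
                }
                with_feature = dict(without)
                with_feature[name] = instance[name]
                total += weight * (predict(with_feature) - predict(without))
        values[name] = total
    return values


def credit_score(applicant):
    """Hypothetical scoring function standing in for a trained model."""
    score = 0.5
    score -= 0.4 * applicant["debt_to_income"]
    score += 0.002 * applicant["months_on_file"]
    score -= 0.1 * applicant["recent_delinquencies"]
    # Interaction term, so contributions are not trivially additive.
    if applicant["debt_to_income"] > 0.5 and applicant["recent_delinquencies"] > 0:
        score -= 0.15
    return score


# Hypothetical reference applicant and denied applicant.
baseline = {"debt_to_income": 0.30, "months_on_file": 60, "recent_delinquencies": 0}
applicant = {"debt_to_income": 0.65, "months_on_file": 14, "recent_delinquencies": 2}

contributions = shapley_values(credit_score, applicant, baseline)
# Most negative contributions first: candidate reasons for an adverse action notice.
reasons = sorted(contributions, key=contributions.get)[:2]
```

The contributions sum to the gap between the applicant's score and the baseline score, which is what lets each factor be reported as a share of the decision. The choice of baseline is a policy decision and changes the attributions, so it would need to be documented alongside the explanation.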

U.S. regulators are also aligning with frameworks like the EU AI Act and the NIST AI Risk Management Framework, which emphasize transparency and human oversight. Recognizing this shift, 91% of financial institutions plan to increase investments in XAI. Explainability is no longer optional - it’s a regulatory necessity. Beyond compliance, this transparency enhances fraud detection and builds trust in AI systems.

Auditability and Transparency

XAI doesn’t just help meet regulatory standards - it ensures decisions are fully auditable and transparent. By creating "tamper-evident" audit trails, XAI provides a detailed record of every AI-driven decision, including inputs, outputs, decision logic, and approvals. These records allow institutions to "replay" decisions during audits or internal reviews, confirming that the system operated within acceptable boundaries.
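The article does not say how such a trail is built. One common approach, assumed here, is to chain records by hash so that editing any past entry breaks every later link. The record fields (`decision_id`, `top_factors`, `approved_by`) are hypothetical.

```python
import copy
import hashlib
import json

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


def _entry_hash(prev_hash, record):
    """Hash the previous entry's hash together with this record's content."""
    payload = json.dumps(record, sort_keys=True)
    return hashlib.sha256((prev_hash + payload).encode("utf-8")).hexdigest()


def append_record(chain, record):
    """Append a decision record linked to the entry before it."""
    prev_hash = chain[-1]["hash"] if chain else GENESIS_HASH
    chain.append({
        "record": record,
        "prev_hash": prev_hash,
        "hash": _entry_hash(prev_hash, record),
    })


def verify_chain(chain):
    """Recompute every hash; any edited record or broken link returns False."""
    prev_hash = GENESIS_HASH
    for entry in chain:
        expected = _entry_hash(prev_hash, entry["record"])
        if entry["prev_hash"] != prev_hash or entry["hash"] != expected:
            return False
        prev_hash = entry["hash"]
    return True


audit_log = []
append_record(audit_log, {
    "decision_id": "D-001",
    "inputs": {"amount": 9800, "country": "XX"},
    "output": "flag",
    "top_factors": ["amount", "country"],
    "approved_by": "analyst_17",
})
append_record(audit_log, {
    "decision_id": "D-002",
    "inputs": {"amount": 120, "country": "US"},
    "output": "clear",
    "top_factors": [],
    "approved_by": None,
})

# Simulate someone quietly rewriting an old decision.
tampered = copy.deepcopy(audit_log)
tampered[0]["record"]["output"] = "clear"
```

Here `verify_chain(audit_log)` passes while `verify_chain(tampered)` fails. A hash chain only makes changes detectable. It does not prevent them, so a real deployment would also need to protect the latest hash, for example by storing it somewhere the log's writers cannot modify.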

"Auditability provides transparency, allowing financial institutions to track AI-driven actions, validate findings, and maintain accountability in compliance workflows." – Lucinity

A real-world example comes from HSBC, which implemented an XAI-enhanced anti-money laundering (AML) system between 2019 and 2020. Using gradient boosting models and SHAP values, the system produced audit trails that allowed compliance officers to validate suspicious activity alerts with clear, documented reasoning. This approach met Bank Secrecy Act requirements for defensible Suspicious Activity Report (SAR) filings. The results? HSBC reduced false positives by 37%, improved SAR filing accuracy by 45%, and saved roughly $100 million annually in compliance costs.

For AML compliance, XAI can explain alerts by detailing the specific factors - such as unusual transaction patterns, geographic anomalies, or behavioral deviations - that triggered them. This level of detail is essential for preparing accurate SARs that can withstand regulatory scrutiny. DBS Bank provides another success story: its XAI-based risk assessment system, powered by SHAP values, made regulatory reporting 90% faster and cut manual review time by 70%.

On top of compliance benefits, XAI delivers financial advantages. Institutions can achieve a 3.2x return on investment by cutting compliance costs, reducing regulatory findings, and streamlining operations. More importantly, XAI transforms AI from a potential compliance risk into a reliable, auditable tool that supports fraud detection and compliance monitoring systems.

Key Use Cases of Explainable AI in Fraud Detection and Compliance

Explainable AI (XAI) is reshaping how financial institutions tackle fraud, monitor money laundering, and verify customer identities. Instead of relying on AI systems that simply flag transactions without context, XAI provides clear, actionable reasons behind each alert. This transparency empowers compliance teams to make faster, more confident decisions.

In 2022, global credit card fraud losses hit $33.5 billion, with estimates suggesting they could climb to $43.47 billion by 2028. Despite this, as of 2023, only 22% of businesses reported using AI in their fraud detection efforts. This gap highlights a major opportunity for companies - especially startups and high-growth businesses - to leverage AI to scale compliance without needing to significantly expand their teams.

Fraud Detection and Prevention

XAI goes beyond merely flagging suspicious transactions; it explains the "why" behind each alert. For example, a stacking ensemble model combining XGBoost, LightGBM, and CatBoost, paired with XAI techniques, achieved a 99% accuracy rate and a 0.99 AUC-ROC score on real-world transaction data. This model identified specific features - like "C14" count features or "V12" engineered features - that played a key role in fraud detection. When an alert is triggered, investigators receive a detailed breakdown of contributing factors, such as unusual spending patterns, geographic inconsistencies, or sudden transaction spikes.

In the U.S., XAI also helps meet regulatory requirements. For instance, under the Fair Credit Reporting Act (FCRA), institutions must send an adverse action notice when a denial or other adverse decision relies on information in a consumer report. Tools like LIME (Local Interpretable Model-Agnostic Explanations) generate decision-specific insights that compliance teams can use to craft customer-facing communications.

"XAI moves beyond simply flagging suspicious transactions to explaining why they are considered suspicious." – Lumenova AI

XAI's transparency extends to other compliance areas, including anti-money laundering (AML) efforts, where it provides clear evidence for flagged activities.

Anti-Money Laundering (AML) Monitoring

AML compliance is another area where XAI proves its value. These systems keep pace with sanctions lists, which can be updated several times a day. By organizing large datasets into behavioral models, XAI identifies suspicious patterns, such as sudden changes in transaction volumes or deviations from a customer's typical behavior.

When an account is flagged, XAI generates detailed evidence narratives outlining the triggers. These narratives create clear audit trails, strengthening the defensibility of Suspicious Activity Report (SAR) filings.
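As a rough illustration of turning a behavioral deviation into an evidence narrative, the sketch below compares current activity with the customer's own history using z-scores. The z-score rule, the threshold of 3, and the feature names are assumptions made for this example. A production system would more likely derive its triggers from model explanations.

```python
from statistics import mean, stdev


def explain_alert(history, current, z_threshold=3.0):
    """Return (flagged, narrative) for activity that deviates from history."""
    triggers = []
    for feature, past_values in history.items():
        typical, spread = mean(past_values), stdev(past_values)
        if spread == 0:
            continue  # no variation in the history, so a z-score is undefined
        z = (current[feature] - typical) / spread
        if abs(z) >= z_threshold:
            triggers.append(
                f"{feature} was {current[feature]} against a typical "
                f"{typical:.1f} (z-score {z:.1f})"
            )
    flagged = bool(triggers)
    if flagged:
        narrative = "Alert raised: " + "; ".join(triggers)
    else:
        narrative = "No deviation above threshold."
    return flagged, narrative


# Hypothetical five-month history for one customer.
history = {
    "monthly_wire_total": [1200, 1500, 1100, 1300, 1400],
    "distinct_countries": [1, 2, 1, 1, 2],
}
current = {"monthly_wire_total": 9800, "distinct_countries": 2}

flagged, narrative = explain_alert(history, current)
```

Because the narrative names each trigger and the baseline it was compared against, a reviewer can check the reasoning directly instead of trusting an unexplained score.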

With compliance costs rising globally - up 15% in the UK, 12% in the U.S., and 9% in Singapore and Australia - XAI offers a way to manage these increasing expenses more effectively. By 2025, AI adoption in AML activities is expected to reach 90%, and Generative AI copilots could improve fraud detection accuracy by 60–99%. Some XAI systems have maintained accuracy rates between 94% and 98% when tested against validated datasets.

The real strength of XAI lies in its ability to back every recommendation with traceable, transparent evidence. This enables compliance teams to review AI-generated summaries, refine them if necessary, and submit reports with full confidence in their accuracy. This same level of transparency also benefits Know Your Customer (KYC) processes.

Know Your Customer (KYC) Processes

XAI’s impact extends to KYC processes by providing detailed and traceable insights that pinpoint discrepancies. Modern systems integrate KYC records, transactional data, and behavioral patterns to create a comprehensive "Customer 360°" view. XAI then analyzes this data to identify issues like mismatched addresses, unusual onboarding activities, or behaviors that don’t align with stated business purposes.

For startups and rapidly expanding businesses, this capability is critical. The surge in digital filings - 13.1 million filings at Companies House in a single year - has increased the risk of opaque corporate structures. XAI helps uncover complex entity arrangements that may require deeper investigation.

Additionally, XAI supports ongoing KYC monitoring. As customer behavior evolves, the system continuously reassesses risk profiles and flags significant changes for review. This proactive approach aligns with regulatory expectations, helping institutions address potential risks before they escalate into major compliance challenges.

For platforms like Lucid Financials, which cater to startups managing multi-entity structures and founder equity scenarios, XAI-enhanced KYC ensures that compliance keeps pace with business growth. Early detection of inconsistencies allows finance teams to resolve issues before they turn into audit findings or regulatory violations.

XAI Techniques Used in Compliance Systems

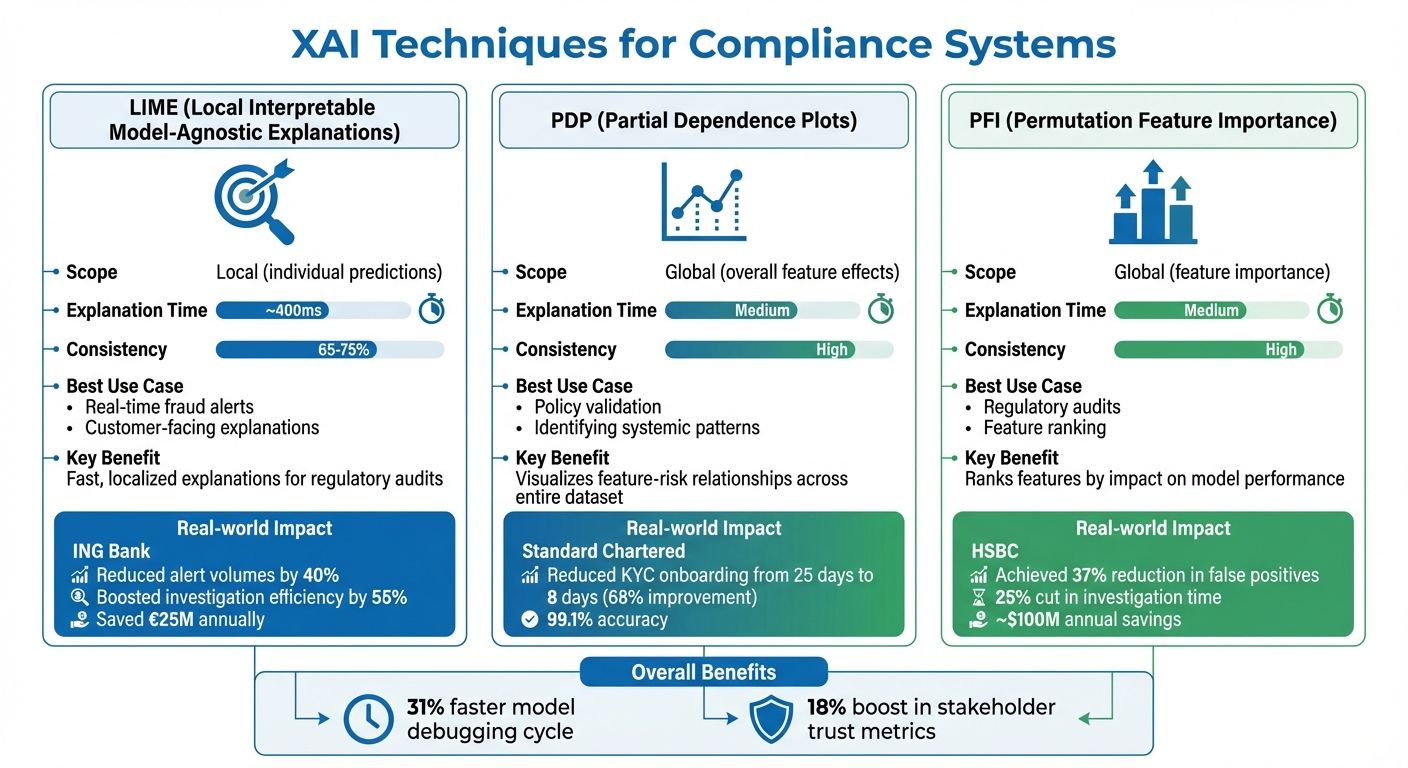

XAI Techniques in Compliance: LIME, PDP, and PFI Comparison

Explainable AI (XAI) systems use specialized methods to make complex machine learning decisions easier to understand. These tools help compliance teams see why an AI flagged a transaction, the factors that influenced a risk score, and how the model behaves in different situations. Three key methods - LIME, PDP, and PFI - are commonly used in compliance-focused XAI systems.

Local Interpretable Model-Agnostic Explanations (LIME)

LIME provides quick, localized explanations, which are especially useful for regulatory audits. It simplifies the AI model's decision-making process for a specific instance by creating a local approximation. For example, if a transaction is flagged as suspicious, LIME tweaks the input data - changing certain feature values randomly - to figure out which changes would alter the model's decision. This process results in a linear model that explains the decision-making process for that specific case.

"Intuitively, an explanation is a local linear approximation of the model's behaviour." – Marco Tulio Ribeiro

In 2019–2020, ING Bank used a system combining deep learning with LIME and SHAP to monitor transactions. This setup reduced alert volumes by 40%, boosted investigation efficiency by 55%, and saved €25 million annually. LIME is fast, generating explanations in about 400 milliseconds, making it well suited to real-time fraud detection where investigators need immediate context. However, because it relies on random sampling, explanations for the same case can differ between runs (65–75% consistency). Fixing the random seed makes an explanation reproducible during audits.
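The perturb-and-fit idea can be sketched in plain Python. This is a simplified stand-in for the `lime` package, not its implementation: it adds Gaussian noise around the instance, weights each sample by proximity, and fits a weighted linear model. The black-box function and its three standardized features are hypothetical.

```python
import math
import random


def solve_linear_system(a, b):
    """Solve a·x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [b[i]] for i, row in enumerate(a)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    x = [0.0] * n
    for i in reversed(range(n)):
        tail = sum(m[i][j] * x[j] for j in range(i + 1, n))
        x[i] = (m[i][n] - tail) / m[i][i]
    return x


def lime_style_explanation(predict, instance, n_samples=500, scale=0.5,
                           kernel_width=1.0, seed=0):
    """Fit a proximity-weighted linear surrogate around one instance."""
    rng = random.Random(seed)  # fixed seed -> reproducible explanation
    d = len(instance)
    xtwx = [[0.0] * (d + 1) for _ in range(d + 1)]
    xtwy = [0.0] * (d + 1)
    for _ in range(n_samples):
        offsets = [rng.gauss(0.0, scale) for _ in range(d)]
        sample = [v + o for v, o in zip(instance, offsets)]
        # Samples closer to the instance get more weight.
        weight = math.exp(-sum(o * o for o in offsets) / kernel_width ** 2)
        row = [1.0] + offsets  # intercept plus offsets from the instance
        y = predict(sample)
        for i in range(d + 1):
            xtwy[i] += weight * row[i] * y
            for j in range(d + 1):
                xtwx[i][j] += weight * row[i] * row[j]
    coefficients = solve_linear_system(xtwx, xtwy)
    return coefficients[1:]  # one local slope per feature


def black_box_risk(x):
    """Hypothetical fraud model over [amount_z, account_age_z, velocity_z]."""
    z = 0.8 * x[0] - 1.2 * x[1] + 0.5 * x[0] * x[2]
    return 1.0 / (1.0 + math.exp(-z))


flagged_transaction = [1.5, -0.5, 2.0]
explanation = lime_style_explanation(black_box_risk, flagged_transaction, seed=7)
repeat = lime_style_explanation(black_box_risk, flagged_transaction, seed=7)
```

Running with the same seed returns identical coefficients, which is the property an auditor needs when replaying an explanation. With a different seed the slopes would generally shift, since they depend on which perturbations were sampled.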

Partial Dependence Plots (PDP)

PDP provides a broader view of how a model behaves, offering insights into its alignment with institutional risk policies. While LIME focuses on individual decisions, PDP visualizes the relationship between a specific feature (like transaction frequency or account age) and the predicted risk score across the entire dataset. For example, a PDP might show that as transaction frequency increases from 5 to 50 per month, the fraud risk score rises steadily.

This global perspective helps confirm that the model's logic matches the organization's risk policies. Standard Chartered improved its KYC process by using explainable risk scoring with Random Forest models and LIME. The result? Customer onboarding times dropped from over 25 days to just 8 days - a 68% improvement - while maintaining 99.1% accuracy in risk classification. One drawback of PDP is that it assumes features are independent. If two features are highly correlated (like transaction amount and account balance), the plots might show unrealistic combinations, potentially misleading reviewers.
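A partial dependence curve can be computed by forcing one feature to each grid value across the whole dataset and averaging the predictions. The sketch below does this for transaction frequency, using a hypothetical risk function and a tiny made-up dataset.

```python
def partial_dependence(predict, rows, feature, grid):
    """Average prediction over all rows with `feature` forced to each grid value."""
    curve = []
    for value in grid:
        total = 0.0
        for row in rows:
            modified = dict(row)       # copy so the dataset is left untouched
            modified[feature] = value  # override one feature, keep the rest
            total += predict(modified)
        curve.append((value, total / len(rows)))
    return curve


def fraud_risk(row):
    """Hypothetical risk model: risk grows with frequency; new accounts add risk."""
    risk = 0.05 + 0.01 * row["tx_per_month"]
    if row["account_age_months"] < 6:
        risk += 0.2
    return risk


# Hypothetical customers.
customers = [
    {"tx_per_month": 8, "account_age_months": 3},
    {"tx_per_month": 22, "account_age_months": 40},
    {"tx_per_month": 47, "account_age_months": 12},
    {"tx_per_month": 15, "account_age_months": 2},
]

curve = partial_dependence(fraud_risk, customers, "tx_per_month", [5, 20, 35, 50])
risks = [risk for _, risk in curve]
```

The override step is also where the independence assumption enters: every row is paired with every grid value, even combinations that never occur in real data. That is why correlated features can produce misleading curves.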

Permutation Feature Importance (PFI)

PFI ranks features based on their importance to the model, offering a comprehensive view of how each feature impacts overall performance. It works by shuffling a feature's values - breaking its connection to the outcome - and measuring the resulting drop in accuracy. For instance, if shuffling "transaction location" significantly reduces accuracy, it indicates that the feature plays a key role in the model's decisions.

This method is particularly valuable for regulatory audits, where reviewers want to know which inputs a model depends on most. The HSBC AML system described earlier, built on gradient boosting models and SHAP values, shows what that kind of transparency can deliver: a 37% reduction in false positives, a 25% cut in investigation time, and savings of around $100 million annually. When combined with SHAP or PDP, PFI gives regulators a fuller view of the model's behavior and helps show that it meets transparency standards.
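The shuffle-and-measure procedure can be sketched as follows. The rule-based model, the feature names, and the eight labeled rows are hypothetical, chosen so that one feature matters and the other does not.

```python
import random


def accuracy(predict, rows, labels):
    """Share of rows where the prediction matches the label."""
    return sum(predict(r) == y for r, y in zip(rows, labels)) / len(rows)


def permutation_importance(predict, rows, labels, features, n_repeats=20, seed=0):
    """Mean accuracy drop after shuffling each feature's column."""
    rng = random.Random(seed)
    base = accuracy(predict, rows, labels)
    importances = {}
    for feature in features:
        drops = []
        for _ in range(n_repeats):
            shuffled = [row[feature] for row in rows]
            rng.shuffle(shuffled)  # break the link between feature and outcome
            permuted = [dict(row, **{feature: v}) for row, v in zip(rows, shuffled)]
            drops.append(base - accuracy(predict, permuted, labels))
        importances[feature] = sum(drops) / n_repeats
    return importances


def flag_fraud(row):
    """Hypothetical model that only looks at transaction location."""
    return row["transaction_location"] == "foreign"


rows = [
    {"transaction_location": "foreign", "channel": "web"},
    {"transaction_location": "domestic", "channel": "web"},
    {"transaction_location": "foreign", "channel": "app"},
    {"transaction_location": "domestic", "channel": "app"},
    {"transaction_location": "foreign", "channel": "web"},
    {"transaction_location": "domestic", "channel": "app"},
    {"transaction_location": "foreign", "channel": "app"},
    {"transaction_location": "domestic", "channel": "web"},
]
labels = [row["transaction_location"] == "foreign" for row in rows]

importances = permutation_importance(
    flag_fraud, rows, labels, ["transaction_location", "channel"]
)
```

Shuffling `channel` leaves accuracy unchanged because the model never reads it, while shuffling `transaction_location` lowers accuracy. Averaging over several shuffles reduces the noise from any single permutation.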

| Technique | Scope | Explanation Time | Consistency | Best Use Case |
|---|---|---|---|---|
| LIME | Local (individual predictions) | ~400ms | 65–75% | Real-time fraud alerts and customer-facing explanations |
| PDP | Global (overall feature effects) | Medium | High | Policy validation and identifying systemic patterns |
| PFI | Global (feature importance) | Medium | High | Regulatory audits and feature ranking |

Organizations that adopt XAI techniques report a 31% faster model debugging cycle and an 18% boost in stakeholder trust metrics. For startups and growing companies, these tools provide the transparency needed to meet regulatory requirements while maintaining the performance of advanced AI systems. Together, LIME, PDP, and PFI offer the clarity compliance teams need for both immediate decision-making and in-depth audits.

Benefits and Challenges of Explainable AI in Compliance

Explainable AI (XAI) is making waves in regulatory compliance, offering a mix of opportunities and hurdles. Financial institutions are seeing a 3.2x return on investment with XAI systems, and 91% are planning to boost their XAI budgets. These figures highlight the technology's ability to cut false positives, speed up investigations, and meet regulatory standards - key factors in fraud detection and compliance monitoring. But to unlock these advantages, organizations must address technical challenges, resource demands, and performance trade-offs.

One of XAI's standout benefits is regulatory alignment. By providing clear, documented AI decisions, XAI helps institutions meet U.S. and international regulatory standards. This is a big deal, considering that fines under the EU AI Act can reach €35 million or 7% of worldwide annual turnover, whichever is higher. Beyond avoiding penalties, XAI fosters trust by offering clear explanations for decisions like loan denials or flagged transactions. This transparency can reduce customer dissatisfaction and improve relationships with regulators.

"Financial institutions need to move beyond black-box AI to explainable models that can withstand regulatory scrutiny while maintaining performance advantages." – McKinsey & Company

However, XAI isn't without its challenges. A major hurdle is the performance-interpretability trade-off. High-performing black-box models, like deep learning, often require additional tools to explain their decisions, which can slow down processes. On the flip side, simpler models, such as decision trees, are easier to interpret but may sacrifice predictive accuracy. There's also a lack of standardized metrics to assess explanation quality, leading to inconsistent compliance reporting across teams. And let’s not forget the costs: organizations may spend $7,350 per month on AWS infrastructure and as much as $90,000 per month on specialized talent to implement XAI.

Comparison of Benefits and Challenges

The table below breaks down the key advantages and obstacles of XAI in compliance:

| Benefit Category | Advantage | Challenge |
|---|---|---|
| Regulatory Alignment | Supports compliance with EU AI Act and GDPR "right to explanation" rules. | Documenting decisions for complex models like deep learning is highly challenging. |
| Trust & Transparency | Builds trust with customers and regulators by providing clear reasoning. | Risk of "explanation drift", where explanations fail to align with model logic over time. |
| Operational Efficiency | Cuts false positives in anti-money laundering (AML) and fraud detection by 30–50%. | High upfront costs for infrastructure and expert talent. |
| Risk Management | Helps detect biases and validates models proactively. | Balancing accuracy and interpretability remains a significant challenge. |

These trade-offs shape how XAI is adopted in compliance, and they carry over into financial automation.

Implementing Explainable AI in Financial Automation

Bringing Explainable AI (XAI) into financial compliance processes can be streamlined, especially for startups. The trick lies in finding the right balance between speedy automation and thorough human oversight, all while maintaining clear documentation. AI-driven financial tools can process tasks up to 100 times faster than manual methods - but only when paired with robust safeguards and governance frameworks.

The best way to roll out XAI is to start small. Focus on a single, high-control process - like handling accounts payable exceptions or monthly reconciliations - as a pilot. This lets you test explainability features, fine-tune approval workflows, and build trust with your team and auditors before scaling to more intricate use cases. Next, let’s explore how human-in-the-loop systems play a key role in this process.

Human-in-the-Loop Systems

Human-in-the-loop (HITL) systems ensure that AI decisions are never made in isolation. Every major financial decision should include clear approval steps, escalation protocols, and a record of reviewer identities with timestamps. This creates a detailed audit trail, satisfying regulatory requirements while also catching errors that AI might overlook.

"Human oversight must be explicit: approval steps, escalation thresholds, and documented reviewer identities with timestamps." – Ameya Deshmukh

Here’s how HITL works: the AI routes any transaction or decision whose risk confidence meets a set threshold to a human reviewer. For example, if an AI system identifies a transaction as potentially fraudulent with a 75% confidence level, it automatically routes the case to a compliance officer. The officer reviews the AI’s reasoning and then either approves, rejects, or requests more context. This entire process, including the reviewer’s identity and timestamp, is logged for future audits.
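The routing and logging flow described above might look like the sketch below. The 0.75 threshold comes from the example. The `Case` structure, the outcome labels, and the reviewer ID are hypothetical.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone

REVIEW_THRESHOLD = 0.75  # mirrors the 75% confidence example
ALLOWED_OUTCOMES = {"approve", "reject", "request_context"}


def _now():
    """UTC timestamp in ISO 8601 form for audit entries."""
    return datetime.now(timezone.utc).isoformat()


@dataclass
class Case:
    case_id: str
    fraud_confidence: float
    reasons: list
    audit_log: list = field(default_factory=list)


def route(case, threshold=REVIEW_THRESHOLD):
    """Send the case to a human when confidence meets the threshold; log either way."""
    decision = "human_review" if case.fraud_confidence >= threshold else "no_review"
    case.audit_log.append({
        "event": "routed",
        "decision": decision,
        "confidence": case.fraud_confidence,
        "reasons": case.reasons,
        "at": _now(),
    })
    return decision


def record_review(case, reviewer_id, outcome):
    """Log who reviewed the case, what they decided, and when."""
    if outcome not in ALLOWED_OUTCOMES:
        raise ValueError(f"unknown outcome: {outcome}")
    case.audit_log.append({
        "event": "reviewed",
        "reviewer": reviewer_id,
        "outcome": outcome,
        "at": _now(),
    })


case = Case(
    case_id="TX-1042",
    fraud_confidence=0.75,
    reasons=["amount far above customer average", "new payee country"],
)
routing = route(case)
if routing == "human_review":
    record_review(case, reviewer_id="officer_jlee", outcome="request_context")
```

Logging the routing decision as well as the review means the trail shows why a case reached a human, not only what the human decided.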

The payoff? Audits become much less time-consuming. Companies can see a 30–60% reduction in controls testing hours, as evidence collection becomes automated and ongoing rather than a last-minute scramble at year-end. For startups facing their first SOC 2 audit or preparing for due diligence, this kind of documentation can be a game-changer. And when paired with integrated platforms, startups can access real-time, explainable insights tailored to their needs.

Integration with Startup Financial Platforms

Startups thrive with financial platforms that embed XAI features. Take Lucid Financials, for instance. It combines AI automation with expert oversight to deliver clean financial records in just seven days, all while maintaining SOC 2–compliant data environments. The platform uses AI to forecast finances, identify R&D tax credits, and generate investor-ready reports - all with built-in explainability.

"Just want to say props to the whole Lucid team. We pulled up the Lucid platform in a meeting with a VC and they were extremely impressed. His jaw just about dropped when he saw October was even up to date." – Giorgio Riccio, Founder of Lumino Technologies

Lucid’s real-time accuracy comes from AI that continuously scans transactions, categorizes them, and flags anomalies for human review through Slack. While the AI handles categorization and reconciliation at scale, expert accountants ensure everything checks out. Founders also get 24/7 support via Slack, allowing them to ask about burn rate, runway, or tax compliance and receive instant, AI-backed insights verified by human experts.

For compliance-heavy tasks like R&D tax credits, Lucid’s AI identifies qualifying expenses across payroll, contractors, and cloud infrastructure. It then generates detailed documentation explaining why each expense qualifies, showing how XAI supports regulatory transparency.

"Lucid turned our bookkeeping and taxes from a headache into a simple, reliable process. Their CFO insights give us clarity to plan growth with confidence - it feels like having a full finance team on demand." – Aviv Farhi, Founder and CEO of Showcase

When choosing an AI-powered financial platform, ensure it offers SOC 2 Type II certification and provides real-time explainability for every automated decision. This ensures your financial data stays secure while meeting the transparency standards regulators require.

Conclusion

Explainable AI (XAI) transforms compliance from a reactive chore into a seamless, transparent part of daily operations. By offering clear reasoning behind every decision - whether it’s flagging unusual transactions, identifying R&D tax credits, or generating reports for investors - XAI ensures organizations meet strict regulatory standards like the EU AI Act, GDPR, and U.S. AML laws. This level of clarity not only satisfies regulators but also drives measurable improvements in performance.

"AI changes regulatory compliance by making it continuous, explainable, and auditable - embedded in day‑to‑day finance work." – Ameya Deshmukh, Everworker

The numbers speak for themselves: AI-powered fraud detection can boost accuracy by 60–99%, while XAI reduces false positives by 30–50% and improves investigation efficiency by 40–60%. With AI adoption in AML-related activities expected to reach 90% by 2025, startups leveraging XAI are better equipped to meet evolving regulatory demands. These benefits highlight XAI’s pivotal role in modern compliance frameworks.

For fast-growing companies, the real game-changer is operational confidence. Imagine walking into a VC meeting with real-time, explainable financial data at your fingertips - just as Giorgio Riccio experienced with Lucid Financials. That kind of transparency doesn’t just check a compliance box; it becomes a strategic advantage that wows investors. XAI transforms financial systems from being a back-office necessity into a competitive edge.

Start small - focus on one high-control process, integrate human oversight, and ensure every AI decision includes a detailed audit trail. Whether preparing for a SOC 2 audit or scaling toward Series C funding, XAI provides the transparency, speed, and trust needed to grow without cutting corners. By embedding XAI into your operations, compliance evolves into a strategic tool that drives growth and builds trust.

FAQs

What makes an AI model “explainable” in compliance?

An AI model is considered "explainable" in compliance when it can clearly show how it arrives at its decisions. This means providing transparent reasoning, such as evidence, sources, or traces of its decision-making process.

Explainability is especially important in areas like fraud detection, where decisions need to be defensible and auditable. By meeting these standards, organizations can satisfy regulatory requirements, lower the risks associated with audits, and strengthen trust in their AI systems.

How do regulators evaluate XAI explanations during audits?

Regulators evaluate explainable AI (XAI) explanations by examining how clearly and traceably AI systems justify their decisions. They check whether explanations align with established frameworks such as the Federal Reserve's SR 11-7, the UK PRA's SS1/23, or the EU AI Act.

In addition, regulators focus on the presence of consistent and well-documented processes. This includes aspects like control monitoring and anomaly detection, which help ensure that AI decisions are not only understandable but also defensible and adhere to legal and ethical requirements.

How can startups roll out XAI without slowing down operations?

Startups can integrate explainable AI (XAI) into their operations without causing disruptions by embedding it into their existing workflows and leveraging automation. Selecting XAI tools that align smoothly with current systems ensures clarity and openness without interfering with daily operations. Additionally, using AI solutions designed to meet compliance requirements and support data localization simplifies regulatory tasks, cutting down on manual effort and potential delays. This strategy helps maintain productivity while building trust and improving transparency.