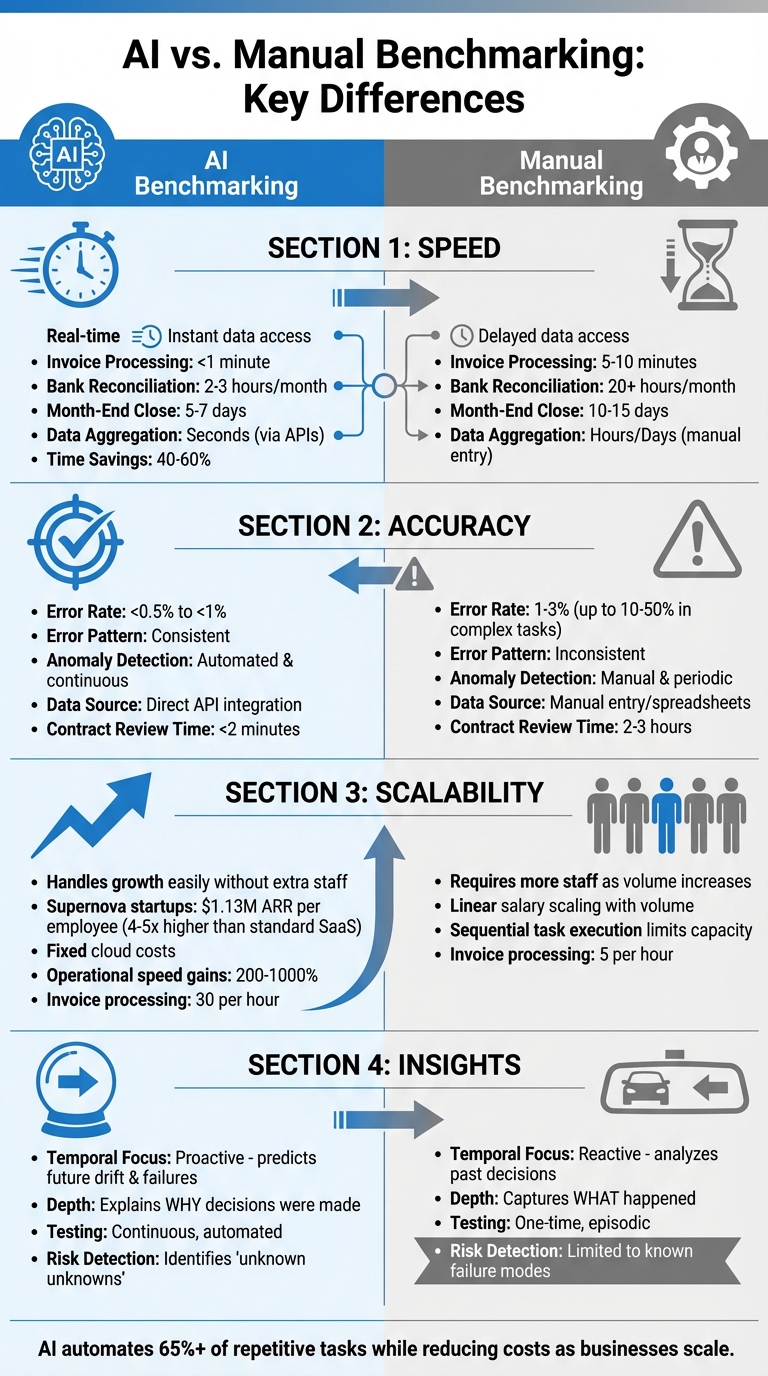

AI benchmarking is faster, more accurate, and scales better than manual methods. Here's why:

- Speed: AI processes data in seconds, while manual methods take hours or days. For example, AI can process an invoice in under 1 minute compared to 5–10 minutes manually.

- Accuracy: AI reduces error rates to under 0.5%, while manual processes have error rates of 1–3% or higher for complex tasks.

- Scalability: AI handles growing workloads without extra staff, unlike manual methods that require more hires as volume increases.

- Insights: AI predicts future trends and identifies risks, while manual methods focus only on past performance.

Quick Comparison

| Feature | AI Benchmarking | Manual Benchmarking |
|---|---|---|
| Speed | Real-time processing | Time-consuming |
| Error Rate | <0.5% | 1–3% or higher |
| Scalability | Handles growth easily | Requires more staff |
| Insights | Predictive and detailed | Reactive and limited |

AI benchmarking transforms decision-making by providing faster, more reliable, and scalable solutions for startups and businesses.

AI vs Manual Benchmarking: Speed, Accuracy, Scalability & Insights Comparison

Speed: Real-Time AI vs. Time-Intensive Manual Methods

How AI Delivers Faster Results

Speed is one of the clearest differences between AI and manual benchmarking. By integrating with APIs from sources like bank feeds and payment processors, AI removes most of the need for manual data entry. Tools equipped with Optical Character Recognition (OCR) can scan invoices or documents and turn them into organized datasets in just seconds. The time savings add up quickly: processing an invoice drops from 5–10 minutes to less than 1 minute, while monthly bank reconciliations go from over 20 hours to just 2–3 hours.

The efficiency AI brings doesn’t stop at individual tasks. Month-end close cycles, which traditionally take 10–15 days, can now be completed in just 5–7 days. In fact, businesses adopting automated financial workflows report saving 40–60% of their time on average. The speed comparison table later in this section breaks down these time savings.

Why Manual Methods Take Longer

Manual benchmarking, on the other hand, is a slow and tedious process. It involves repetitive tasks like entering data line by line into spreadsheets and manually reconciling accounts. Every invoice must be read, categorized, and entered by hand. Comparing contracts? That means poring over PDFs or Word documents and manually highlighting differences - a process that can take days or even weeks. While AI provides real-time insights, manual methods often result in outdated reports, making it clear why automation is the faster choice.

Speed Comparison Table

| Task | AI-Driven Timeframe | Manual Timeframe |
|---|---|---|
| Invoice Processing | Under 1 minute | 5–10 minutes |
| Bank Reconciliation | 2–3 hours per month | 20+ hours per month |
| Month-End Close | 5–7 days | 10–15 days |
| Data Aggregation | Seconds (via APIs) | Hours/Days (manual entry) |
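To see how the table's ranges translate into percentage savings, here is a minimal Python sketch. It takes the midpoint of each range and treats "under 1 minute" as 1 and "20+ hours" as 20. Both are simplifying assumptions made for illustration, not figures from the sources.

```python
# Ranges quoted in the speed comparison table, as (manual, AI) pairs.
# Assumption: "under 1 minute" is treated as 1 and "20+ hours" as 20.
TASKS = {
    "Invoice processing (minutes)": ((5, 10), (1, 1)),
    "Bank reconciliation (hours/month)": ((20, 20), (2, 3)),
    "Month-end close (days)": ((10, 15), (5, 7)),
}


def midpoint(bounds):
    """Middle of a (low, high) range."""
    low, high = bounds
    return (low + high) / 2


def percent_saved(manual, automated):
    """Share of manual time eliminated, as a percentage."""
    return (manual - automated) / manual * 100


def savings_report(tasks=TASKS):
    """Map each task name to its percentage time saving (one decimal)."""
    return {
        name: round(percent_saved(midpoint(manual), midpoint(ai)), 1)
        for name, (manual, ai) in tasks.items()
    }


if __name__ == "__main__":
    for name, saved in savings_report().items():
        print(f"{name}: about {saved}% less time")
```

Using midpoints, the month-end close saving works out to 52%, which sits inside the 40–60% range reported above. The invoice and reconciliation savings come out higher, at roughly 87% each.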


Accuracy: Error Reduction with AI vs. Human Limitations

How AI Improves Precision and Detects Errors

AI-driven benchmarking has a major advantage: it sharply reduces the risk of manual data entry errors. By pulling data directly from APIs, AI minimizes human mistakes, cutting error rates to under 0.5%, compared to the 1–3% typically seen in manual processes. This isn’t just about working faster - it’s about getting things right. Automated checks verify that journal entries balance and that transactions are mapped correctly to a predefined chart of accounts.
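The two checks mentioned above, balanced entries and valid account mapping, are simple to express in code. The following Python sketch is illustrative only: the chart of accounts, the `validate_entry` function, and the tuple layout are hypothetical and not taken from any particular platform.

```python
from decimal import Decimal

# Hypothetical chart of accounts; a real one would come from the ledger system.
CHART_OF_ACCOUNTS = {"1000": "Cash", "2000": "Accounts Payable", "6100": "Software"}


def validate_entry(lines):
    """Return a list of problems found in one journal entry.

    Each line is an (account_code, debit, credit) tuple with Decimal amounts.
    """
    problems = []
    # Check 1: every line must map to a known account.
    for code, _debit, _credit in lines:
        if code not in CHART_OF_ACCOUNTS:
            problems.append(f"unknown account {code}")
    # Check 2: total debits must equal total credits.
    total_debits = sum((debit for _, debit, _ in lines), Decimal("0"))
    total_credits = sum((credit for _, _, credit in lines), Decimal("0"))
    if total_debits != total_credits:
        problems.append(f"out of balance by {total_debits - total_credits}")
    return problems


if __name__ == "__main__":
    entry = [
        ("6100", Decimal("49.00"), Decimal("0")),
        ("1000", Decimal("0"), Decimal("49.00")),
    ]
    print(validate_entry(entry) or "entry is valid")
```

Amounts use `Decimal` rather than floats so that cent-level comparisons stay exact.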

Another standout feature of AI is its automated anomaly detection. Instead of relying on monthly reviews, AI continuously monitors transactions, flagging anything that deviates from historical patterns or established norms. It can catch subtle issues - like rephrased risks in contracts or unnoticed omissions - that a human reviewer might miss during a manual check. Results still depend heavily on the model and the task, though. A 2026 study by DualEntry Labs tested 19 AI models on 101 real-world accounting tasks: the top performer, Gemini 3.1 Pro, achieved 66.0% accuracy on complex workflows, while the lowest-scoring model managed only 19.8%. Because AI errors tend to follow predictable patterns, they are also easier to find and correct through fine-tuning.
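Flagging transactions that deviate from historical patterns can be approximated with a basic statistical check. The sketch below uses a z-score against past amounts. It is a deliberately simple stand-in for what production systems do, and the function name and the threshold of 3.0 are assumptions chosen for illustration.

```python
from statistics import mean, stdev


def flag_anomalies(history, new_amounts, threshold=3.0):
    """Return the new amounts whose z-score against history exceeds threshold."""
    if len(history) < 2:
        raise ValueError("need at least two historical values")
    mu = mean(history)
    sigma = stdev(history)
    if sigma == 0:
        # No variation in history: anything different from the mean is unusual.
        return [x for x in new_amounts if x != mu]
    return [x for x in new_amounts if abs(x - mu) / sigma > threshold]


if __name__ == "__main__":
    past_payments = [100, 102, 98, 101, 99]
    print(flag_anomalies(past_payments, [100.5, 250]))
```

Here a payment of 250 against a history clustered around 100 is flagged, while 100.5 passes. Running a check like this on every new transaction, rather than once a month, is what makes the monitoring continuous.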

"Large language models are powerful drafting tools. But finance doesn't run on drafts - it runs on validated records." - Woosung Chun, CFO, DualEntry

In contrast, manual methods often fail to match this level of precision, especially when errors are unpredictable.

Common Errors in Manual Benchmarking

Manual benchmarking is prone to errors that are both random and difficult to predict. Typos, spreadsheet formula mistakes, and misclassifications are common, particularly when reviewers are tired or under tight deadlines. These mistakes are inconsistent, making them harder to identify and correct before they impact financial reports.

The challenge becomes even greater when dealing with document-heavy tasks. For example, when reviewing contracts or analyzing complex spreadsheets, humans often miss "silent omissions" - such as overlooked renewals or subtle changes to clauses. These errors can have a ripple effect, disrupting balances, delaying month-end closes, and attracting scrutiny during audits.

Accuracy Comparison Table

Here’s a quick breakdown of the differences between AI-driven and manual accuracy:

| Factor | AI-Driven Accuracy | Manual Accuracy |
|---|---|---|
| Error Rate | Under 0.5% to under 1% | 1–3% (up to 10–50% in complex tasks) |
| Error Pattern | Consistent | Inconsistent |
| Anomaly Detection | Automated and continuous | Manual and periodic |
| Data Source | Direct API integration | Manual entry/spreadsheets |
| Review Time (Contracts) | Under 2 minutes | 2–3 hours |

Scalability: AI's Flexibility vs. Manual Resource Constraints

How AI Scales with Startup Growth

AI benchmarking is built to grow alongside your business. It can pull data from multiple sources, manage enormous datasets with real-time financial insights, and handle increasing transaction volumes - all without needing more staff to keep things running smoothly.

Here’s a standout stat: AI-driven "Supernova" startups average $1.13M in ARR per full-time employee. That’s 4–5 times higher than standard SaaS benchmarks. These companies can skyrocket from $40M ARR in their first year to $125M ARR in the second. How? AI’s ability to translate business logic into code slashes implementation times by as much as 90%.

"There is no cloud without AI anymore." - Bessemer Venture Partners

AI also excels at managing complexities that would overwhelm manual processes. It can automatically process unstructured data, align different data schemas, and maintain context across thousands of transactions. For fast-growing startups, this means their benchmarking systems can expand without constant adjustments or the need to hire more people. This scalability is a game-changer compared to the limitations of manual methods.

Why Manual Methods Struggle to Scale

Manual benchmarking, on the other hand, struggles to keep up. As transaction volumes grow, so does the need for more personnel. Doubling your workload? You’ll need to double your team. This reliance on sequential task execution not only caps capacity but also drives up hiring costs and adds layers of management complexity.

With manual systems, salary expenses rise roughly in proportion to transaction volume. AI’s cloud costs, by contrast, are largely fixed, so the cost per transaction falls as volume grows. This linear scaling of expenses makes manual benchmarking a poor fit for startups experiencing rapid growth, where manual methods quickly hit their limits.
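The difference between linear and mostly fixed costs is easiest to see with a toy model. In the Python sketch below, every number (capacity per person, salary, platform fee, per-transaction cost) is hypothetical and chosen only to show the shape of the two curves.

```python
import math


def manual_cost(transactions, per_person_capacity, salary):
    """Staff cost when each person can handle a fixed number of transactions."""
    headcount = math.ceil(transactions / per_person_capacity)
    return headcount * salary


def automated_cost(transactions, platform_fee, cost_per_transaction):
    """Mostly fixed platform fee plus a small usage-based component."""
    return platform_fee + transactions * cost_per_transaction


# All figures below are hypothetical illustrations, not vendor pricing.
if __name__ == "__main__":
    for volume in (5_000, 10_000, 20_000, 40_000):
        m = manual_cost(volume, per_person_capacity=5_000, salary=60_000)
        a = automated_cost(volume, platform_fee=30_000, cost_per_transaction=0.50)
        print(f"{volume:>6} transactions: manual ${m:,.0f} vs automated ${a:,.0f}")
```

With these made-up inputs, doubling volume from 10,000 to 20,000 transactions doubles the manual cost from $120,000 to $240,000, while the automated cost moves from $35,000 to $40,000.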

Insights Quality: Predictive Analytics vs. Historical Analysis

When it comes to decision-making, the depth and quality of insights often set the stage for success. Building on earlier discussions about speed, accuracy, and scalability, this section dives into how AI-driven tools stack up against manual methods in delivering actionable insights.

AI-Powered Forecasting and Scenario Modeling

AI doesn’t just report what happened in the past - it predicts what’s coming. By detecting trends and shifts early, it provides warnings about potential failures before they occur. This forward-thinking approach is invaluable in staying ahead of the curve.

Well-instrumented AI systems can also show the reasoning behind their outputs. This visibility, often referred to as "observability", offers transparency into how results are produced. As Conor Bronsdon, Head of Developer Awareness at Galileo, explains:

"Agent systems need observability because their behavior emerges from interactions between components rather than following predictable code paths."

AI also excels at catching subtle issues that manual methods often miss. For example, automated evaluation can spot problems like "sandbagging", where a model intentionally underperforms, or "safetywashing", where safety scores are misleadingly tied to general capabilities. In one striking case, an X-ray classification model saw its performance drop by over 20% once spurious cues, like chest drains, were removed from the dataset. This level of scrutiny and validation is very hard to achieve with traditional methods.

Manual Methods: Backward-Looking Analysis

Manual benchmarking, on the other hand, relies solely on historical data and predefined testing logic. While it can document past trends effectively, it struggles to identify emerging risks or adapt to unforeseen scenarios. This backward-looking approach often leaves businesses reacting to problems only after they’ve caused damage.

The reactive nature of manual methods is a significant limitation. By focusing on known failure modes and fixed test scenarios, they miss the "unknown unknowns" - risks that haven’t been previously identified. This gap underscores why AI’s proactive, comprehensive insights are increasingly favored.

Insights Quality Comparison Table

| Insight Feature | AI-Driven Benchmarking | Manual Methods |
|---|---|---|
| Temporal Focus | Proactive: Predicts future drift and failures | Reactive: Analyzes past decisions |
| Depth of Analysis | Explains why decisions were made | Captures what happened |
| Testing Logic | Continuous, automated testing | One-time, episodic testing |
| Risk Detection | Identifies "unknown unknowns" | Limited to known failure modes |

When Startups Should Adopt AI Benchmarking

Startups often face a tipping point where manual benchmarking starts to hold them back. If your team is drowning in vendor contracts or invoices, it's a sign that manual processes are no longer cutting it. At this stage, switching to AI benchmarking becomes a necessity. Why? Because the costs and inefficiencies of manual work quickly outweigh the investment in automation. When the numbers stop adding up, it’s time to make the leap.

AI benchmarking doesn’t just save time - it transforms how quickly you can act. For example, manual document processing takes 10–30 minutes, while AI can handle the same task in just 1–2 seconds. That’s the difference between staying ahead and constantly playing catch-up.

"As your organization grows and scales, processes become more cumbersome and more complex. Managing this without the help of artificial intelligence means more mistakes and admin resources and less efficiency and visibility"

Accuracy is another critical factor. Manual error rates, which can reach 10–15% on complex tasks, can seriously hurt your bottom line. AI systems, on the other hand, can achieve accuracy rates close to 99%. Plus, manual data entry costs a business an average of $28,500 per employee annually. For any startup aiming to grow, automation isn’t just helpful - it’s essential.

Given these challenges, adopting AI-driven solutions can help startups streamline operations and focus on growth.

How Lucid Financials Supports Startup Benchmarking

Lucid Financials simplifies the shift to AI benchmarking with a comprehensive financial platform. Instead of juggling multiple tools for bookkeeping, tax preparation, and forecasting, startups can achieve clean books in just seven days. The platform uses AI for real-time updates and reconciliation, and even integrates with Slack. This means founders can instantly get answers about runway, spending, or performance - no need to wait for monthly reports or scheduled calls.

The platform also shines when it comes to investor-ready reporting. Preparing for funding rounds becomes far less stressful, as Lucid generates board-ready reports and forecasts with a single click. It benchmarks your financial data against market standards, giving you clear insights into whether your terms and metrics align with industry expectations. This is exactly the kind of transparency investors look for during due diligence.

What truly sets Lucid apart is its human-in-the-loop approach. While AI handles repetitive tasks like transaction matching and anomaly detection, experienced finance professionals review the outputs. This ensures the speed and scale of automation are paired with the judgment and strategic insights that only humans can provide.

Best Times to Switch to AI Benchmarking

Certain milestones signal it’s time to rethink your benchmarking methods. One major trigger is preparing for a funding round. Investors demand clean, real-time data, and manual processes simply can’t deliver the precision and polish needed under tight deadlines.

Another clear indicator is when your operations are scaling faster than your team can keep up. If your finance team can’t handle the increasing volume of data, it’s time for automation. Companies report operational speed gains of 200% to 1000% after adopting AI, with automated workflows processing 30 invoices per hour compared to just five manually - a sixfold increase in throughput, or roughly 83% less time per invoice.
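Speed gains can be expressed either as extra throughput or as time saved per item, and the two numbers look very different. Here is a quick sketch using the invoices-per-hour figures above (the function names are illustrative):

```python
def speedup(automated_rate, manual_rate):
    """How many times faster the automated workflow is."""
    return automated_rate / manual_rate


def throughput_gain_pct(automated_rate, manual_rate):
    """Percentage increase in items processed per hour."""
    return (automated_rate - manual_rate) / manual_rate * 100


def time_saved_pct(automated_rate, manual_rate):
    """Percentage reduction in time spent per item."""
    return (1 - manual_rate / automated_rate) * 100


if __name__ == "__main__":
    ai_rate, manual_rate = 30, 5  # invoices per hour
    print(f"Speedup: {speedup(ai_rate, manual_rate):.0f}x")
    print(f"Throughput gain: {throughput_gain_pct(ai_rate, manual_rate):.0f}%")
    print(f"Time saved per invoice: {time_saved_pct(ai_rate, manual_rate):.1f}%")
```

At 30 invoices per hour against 5, this works out to a 6x speedup, a 500% throughput gain, and about 83% less time per invoice. When comparing vendor claims, it helps to check which of these measures is being quoted.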

Lastly, consider switching when administrative work eats into strategic time. If your team spends 15–20 minutes per expense claim chasing receipts and documents, they’re not focusing on higher-value tasks like cash flow forecasting. AI can flag out-of-policy transactions before they become issues, helping you shift from reactive problem-solving to proactive planning. With automation, startups can scale efficiently while maintaining the rigor of data-driven financial strategies.
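Flagging out-of-policy transactions does not have to start with a sophisticated model. The Python sketch below is a plain rule-based stand-in for that idea. The `Expense` fields, the category limits, and the `policy_violations` function are all hypothetical and would need to reflect your own expense policy.

```python
from dataclasses import dataclass


@dataclass
class Expense:
    employee: str
    category: str
    amount: float
    has_receipt: bool


# Hypothetical per-category limits; a real policy would be company-specific.
POLICY_LIMITS = {"meals": 75.0, "travel": 500.0, "software": 200.0}


def policy_violations(expense, limits=POLICY_LIMITS):
    """Return human-readable reasons an expense is out of policy."""
    reasons = []
    limit = limits.get(expense.category)
    if limit is None:
        reasons.append(f"category '{expense.category}' is not covered by policy")
    elif expense.amount > limit:
        reasons.append(
            f"amount {expense.amount:.2f} exceeds {expense.category} limit {limit:.2f}"
        )
    if not expense.has_receipt:
        reasons.append("missing receipt")
    return reasons


if __name__ == "__main__":
    claim = Expense("dana", "meals", 120.0, has_receipt=False)
    for reason in policy_violations(claim):
        print(f"Flagged: {reason}")
```

Running checks like these at submission time, rather than at month-end, is what moves the team from chasing receipts to reviewing only the exceptions.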

Conclusion

AI benchmarking is changing the game for startups by improving speed, accuracy, scalability, and the quality of insights. Moving away from spreadsheets and manual workflows to AI-driven platforms has a transformative effect on how startups operate.

AI platforms can handle over 65% of repetitive tasks related to data management, analytics, and decision-making processes. This level of automation allows teams to focus on more strategic priorities. One of the biggest benefits is in cost efficiency. While manual benchmarking grows costlier with each new hire, AI keeps marginal costs lower as your business scales.

"AI primarily replaces tasks, not roles. Most organizations reassign human effort to oversight, analysis, and decision-making rather than eliminate positions outright." - Samta.ai

This shift doesn't just save money - it changes how teams work. For example, finance teams can step away from routine tasks and focus on higher-level responsibilities like strategic planning, forecasting, and driving growth. This evolution empowers teams to contribute more meaningfully to a startup's success.

A great example of this transformation is Lucid Financials. By blending AI-powered automation with expert human oversight, Lucid Financials delivers precise results quickly. Startups benefit from clean financial records in just seven days, real-time insights via Slack, and investor-ready reports on demand. For startups looking to scale efficiently, adopting AI benchmarking isn’t just an option - it’s a necessity.

FAQs

What data sources does AI benchmarking need?

In practice, AI benchmarking draws on the sources described earlier: API connections to bank feeds and payment processors, plus invoices and other documents converted into structured data through OCR. Measuring performance accurately also depends on standardized tasks and datasets, including diverse test sets, challenge questions, and well-defined evaluation metrics. The goal is to have objective, ground-truth answers that allow a clear assessment of how well an AI system performs on targeted tasks.

How do you validate AI results before using them?

To ensure AI results are reliable, start with reviewing data quality - make sure the inputs are precise and relevant. Then, cross-check outputs by comparing them against manual calculations or established benchmarks. Regular testing is essential to catch errors or biases early on.

It's also important to have governance protocols in place, keep an eye out for model drift, and thoroughly document your validation processes. These steps ensure your AI insights remain dependable and compliant, making them suitable for informed decision-making.
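Cross-checking outputs against manual calculations can be as simple as comparing a verified sample with what the AI produced. The following Python sketch assumes both sides are dictionaries keyed by a shared identifier. The `cross_check` function, the invoice IDs, and the one-cent tolerance are illustrative assumptions.

```python
def cross_check(ai_values, manual_values, tolerance=0.01):
    """Compare AI outputs with manually verified values for the same keys.

    Returns (mismatch_rate, mismatched_keys). Keys missing from the AI
    output count as mismatches.
    """
    if not manual_values:
        raise ValueError("manual sample is empty")
    mismatched = [
        key
        for key, expected in manual_values.items()
        if key not in ai_values or abs(ai_values[key] - expected) > tolerance
    ]
    return len(mismatched) / len(manual_values), sorted(mismatched)


if __name__ == "__main__":
    verified = {"INV-1": 120.00, "INV-2": 75.50, "INV-3": 310.00, "INV-4": 42.00}
    extracted = {"INV-1": 120.00, "INV-2": 75.50, "INV-3": 301.00}
    rate, keys = cross_check(extracted, verified)
    print(f"Mismatch rate: {rate:.0%}, check: {keys}")
```

Recording the mismatch rate from each periodic sample gives a simple way to watch for model drift: a rate that creeps upward over time is a signal to investigate before relying on the outputs.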

When is it worth switching from manual to AI benchmarking?

Switching to AI benchmarking makes sense when the advantages - faster processing, improved precision, and scalable insights - outweigh the cost of changing how you work. Common triggers include preparing for a funding round, transaction volume outgrowing your finance team, and administrative work crowding out strategic tasks. The shift is particularly beneficial for businesses with large-scale or data-heavy operations, where manual methods limit growth and efficiency.