AI churn prediction helps businesses reduce customer turnover by analyzing user behavior, improving retention, and boosting revenue. However, this approach raises ethical concerns about data privacy, transparency, and potential misuse. Companies must collect vast amounts of personal data, often without clear consent, which can erode trust and attract regulatory scrutiny. Key challenges include:

- Transparency issues: AI models often act as "black boxes", making predictions hard to explain.

- Data misuse risks: Sensitive data could be used for intrusive practices or discriminatory pricing.

- Algorithmic bias: Poor training data may lead to unfair outcomes for certain demographics.

- Regulatory compliance: Laws like GDPR and CPRA demand stricter data protection and explicit consent.

Balancing profit-driven AI with privacy-conscious practices requires transparent communication, consent-driven data collection, and privacy-preserving technologies like Differential Privacy. Companies that address these challenges can retain customer trust, comply with regulations, and achieve sustainable growth.

How AI Churn Prediction Raises Ethical Questions

AI churn prediction works by analyzing customer behavior - things like login frequency, feature usage, email engagement, support interactions, and payment patterns - to identify trends that often precede cancellations. Machine learning models such as XGBoost or LightGBM assign a risk score (usually between 0 and 100) to active customers, flagging those likely to leave within 30 to 90 days. These systems boast impressive accuracy rates of 85% to 92% and have been shown to cut churn by 15% to 25%.
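As a rough illustration of how behavioral signals become a 0 to 100 risk score, the sketch below swaps the trained XGBoost or LightGBM model for a hand-weighted logistic function. The feature names, weights, and intercept are assumptions invented for this example; a real model would learn them from labeled churn data.

```python
import math

# Hypothetical weights; a real model would learn these from historical churn labels.
WEIGHTS = {
    "days_since_last_login": 0.08,
    "support_tickets_30d": 0.30,
    "late_payments_90d": 0.60,
    "features_used": -0.25,
}
BIAS = -1.5  # assumed intercept


def churn_risk_score(customer: dict) -> float:
    """Return a churn risk score between 0 and 100."""
    z = BIAS + sum(WEIGHTS[name] * customer.get(name, 0.0) for name in WEIGHTS)
    probability = 1.0 / (1.0 + math.exp(-z))
    return round(100.0 * probability, 1)


engaged = {"days_since_last_login": 1, "support_tickets_30d": 0,
           "late_payments_90d": 0, "features_used": 8}
drifting = {"days_since_last_login": 30, "support_tickets_30d": 4,
            "late_payments_90d": 2, "features_used": 1}

for label, customer in (("engaged", engaged), ("drifting", drifting)):
    print(label, churn_risk_score(customer))
```

The customer with long gaps between logins, more tickets, and late payments scores much higher than the engaged one, which is the ranking behavior a retention team would act on.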

But this level of precision comes at a cost: collecting and analyzing a massive amount of customer data. It’s not just about what people buy - it’s about how they interact, when they engage, and even the tone of their support conversations. The table below highlights the types of data typically gathered and their predictive relevance:

| Data Category | Specific Data Types Collected | Predictive Power |
|---|---|---|
| Behavioral | Login frequency, session length, feature adoption depth/breadth | Very High |
| Support | Ticket volume, resolution time, sentiment analysis of ticket text | Very High |
| Engagement | Email open rates, attendance at check-in calls, referrals | High |
| Financial | Late payments, billing disputes, license utilization vs. headcount | High |

Transparency Challenges

One major ethical concern is the lack of transparency. These models often operate as "black boxes", making it difficult for both customers and internal teams to understand why certain individuals are flagged as at-risk. This lack of clarity raises questions about trust and accountability in decision-making processes.
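One way to open the black box, at least for a simple linear score, is to report which signals pushed a customer's risk above a typical baseline. The sketch below is a minimal version of that idea; the weights and baseline values are hypothetical. Tree ensembles need dedicated attribution methods such as SHAP values, which this example does not implement.

```python
# Hypothetical linear weights and population baselines (illustrative only).
WEIGHTS = {"days_since_last_login": 0.08, "support_tickets_30d": 0.30, "features_used": -0.25}
BASELINE = {"days_since_last_login": 5.0, "support_tickets_30d": 1.0, "features_used": 6.0}


def top_reasons(customer: dict, k: int = 2) -> list:
    """Rank features by how much they push this customer's score above the baseline."""
    contributions = {
        name: WEIGHTS[name] * (customer[name] - BASELINE[name]) for name in WEIGHTS
    }
    ranked = sorted(contributions.items(), key=lambda item: item[1], reverse=True)
    # Only report features that increase risk relative to the baseline.
    return [(name, round(value, 2)) for name, value in ranked[:k] if value > 0]


customer = {"days_since_last_login": 25, "support_tickets_30d": 3, "features_used": 2}
for name, contribution in top_reasons(customer):
    print(f"{name}: +{contribution}")
```

Reason codes like these give internal teams something concrete to review, and they can be turned into a plain-language explanation for the customer.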

Risks of Data Misuse

Another issue is the potential misuse of detailed customer data. Metrics like complaint frequency or peak usage times could be leveraged for practices like discriminatory pricing or excessive monitoring. Striking a balance between useful personalization and what critics might call "creepy overreach" is a delicate task. Moreover, companies must ensure they collect this behavioral data with explicit consent, as regulatory bodies increasingly demand greater transparency and customer permission.

Algorithmic Bias

Bias in AI models represents another significant ethical hurdle. If the training data is flawed or incomplete, the algorithm might unfairly assess risk for specific demographics, leading to discriminatory outcomes. This not only damages customer trust but could also undermine the effectiveness of churn reduction strategies. Regular audits and robust evaluation protocols are essential to avoid perpetuating biases or basing decisions on unrealistic data scenarios. Ultimately, these challenges highlight the importance of building AI systems that prioritize privacy, fairness, and trust.
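A basic audit of this kind can start by comparing how often each group is flagged as high-risk. The sketch below assumes a list of (group, risk score) pairs and a flag threshold of 70; both the data and the threshold are made up, and a ratio well below 1.0 is a prompt for investigation rather than proof of discrimination.

```python
from collections import defaultdict


def flag_rates(records, threshold=70):
    """Share of customers per group whose risk score meets the threshold."""
    totals = defaultdict(int)
    flagged = defaultdict(int)
    for group, score in records:
        totals[group] += 1
        if score >= threshold:
            flagged[group] += 1
    return {group: flagged[group] / totals[group] for group in totals}


def disparity_ratio(rates):
    """Lowest flag rate divided by the highest; 1.0 means equal rates."""
    highest = max(rates.values())
    return min(rates.values()) / highest if highest > 0 else 1.0


# Made-up (group, risk_score) pairs for illustration.
sample = [("A", 82), ("A", 40), ("A", 75), ("A", 30),
          ("B", 20), ("B", 71), ("B", 35), ("B", 10)]
rates = flag_rates(sample)
print(rates, disparity_ratio(rates))
```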


Why Privacy and Trust Matter in AI Systems

Privacy and trust play a crucial role in encouraging customers to share data that powers effective AI systems. Yet, only 27% of consumers feel they truly understand how companies use their personal information. When trust is lacking, people may withhold data or provide inaccurate information, which can severely impact the performance of churn prediction models. Adding to this challenge are the ever-changing global data protection laws, which place stricter requirements on how companies handle personal data.

The regulatory landscape highlights these concerns. For instance, GDPR mandates explicit consent and enforces strict data minimization. Meanwhile, the CCPA and the CPRA, which amended it, take a different approach by allowing data collection by default but requiring businesses to honor opt-out requests. Starting in 2026, these laws will also require companies to explain the logic behind automated decisions that impact consumers. Imagine a churn prediction model labeling a customer as "high-risk", which then limits their access to premium features or special pricing. Under these laws, businesses must not only explain the criteria behind such decisions but also give customers a meaningful way to opt out. Meeting these regulatory demands doesn’t just ensure compliance - it also strengthens customer trust, which is essential for effective churn prediction.

"In the AI era, trust is not a soft metric. It is the foundation everything else is built on." - Adelina Peltea, Chief Marketing Officer, Usercentrics

Companies are moving away from one-time consent models and focusing on building ongoing, trust-based relationships. Instead of overwhelming users with data requests at signup, businesses are introducing data-sharing decisions gradually, in step with the progression of the customer relationship. This "privacy-led UX" approach reduces friction, increases opt-in rates, and improves the quality of first-party data - directly benefiting the accuracy and reliability of churn models.

The stakes are even higher when AI systems infer sensitive information that users haven’t explicitly provided. For example, AI models can predict private attributes, such as financial difficulties or health conditions, with 80% accuracy based on subtle behavioral patterns. Often, users remain unaware that such inferences are being made. This highlights the importance of adhering to the principle of purpose limitation - data collected for one purpose, like customer service, shouldn’t be repurposed for churn analysis without clear consent. Protecting against these hidden inferences ensures not only user privacy but also the credibility of churn analytics.
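Purpose limitation can be enforced in code by recording which purposes each customer has agreed to and filtering on that before any training run. The sketch below is one possible shape for such a check; the `CustomerRecord` type and the purpose labels are hypothetical, and real consent records would come from a consent management system.

```python
from dataclasses import dataclass, field


@dataclass
class CustomerRecord:
    customer_id: str
    # Purposes the customer has agreed to, e.g. {"customer_service", "churn_analysis"}.
    consented_purposes: set = field(default_factory=set)


def eligible_for(purpose: str, records: list) -> list:
    """Keep only records whose owner consented to this specific purpose."""
    return [r for r in records if purpose in r.consented_purposes]


records = [
    CustomerRecord("c1", {"customer_service"}),
    CustomerRecord("c2", {"customer_service", "churn_analysis"}),
]
training_set = eligible_for("churn_analysis", records)
print([r.customer_id for r in training_set])
```

Here the first customer's support data stays out of the churn model because consent was given for customer service only.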

1. AI Churn Prediction for Profit Maximization

Data Usage

AI churn prediction models rely on a variety of digital behavioral signals, such as mouse movements, email engagement, customer support interactions, payment patterns, and transaction histories. These models have come a long way - from relying on manual intuition in the early 2000s to employing advanced techniques like deep learning and AutoML between 2021 and 2025. Today, churn rates are recalculated in real time as AI systems continuously track and analyze customer behavior. Despite these advances, many organizations struggle with fragmented data systems and inconsistent definitions of what constitutes "churn." These challenges can lead even the most advanced AI tools to produce less-than-accurate predictions. That said, when implemented effectively, these capabilities can deliver measurable financial improvements.

Business Outcomes

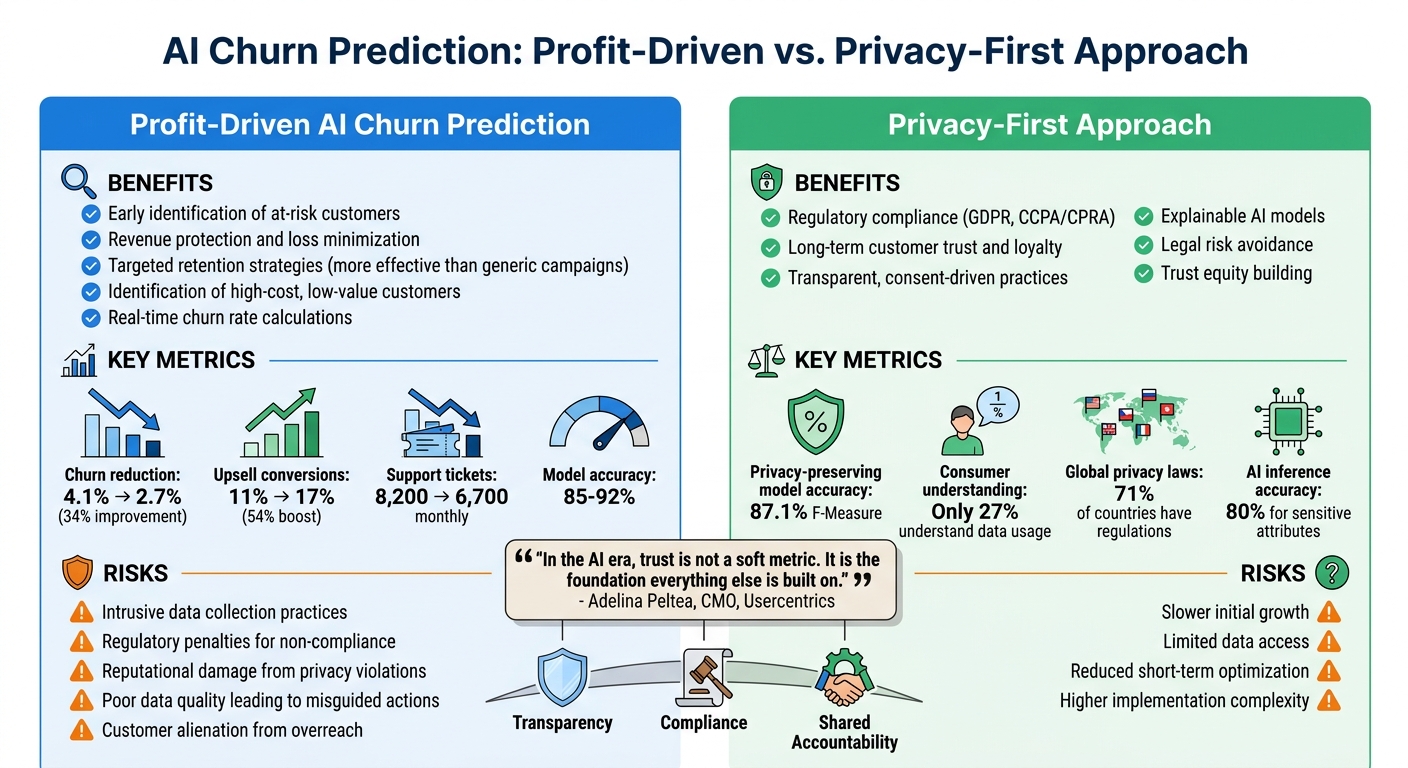

Using AI to predict and address churn has a direct impact on a company's bottom line. Consider this: industries like finance and credit lose an estimated $68.2 billion annually to customer churn, while cable and telecom companies face losses of roughly $41.6 billion each year. Companies that adopt AI-driven churn strategies have seen tangible results, including:

- A reduction in quarterly churn rates from 4.1% to 2.7% - a 34% improvement.

- An upswing in upsell conversions, jumping from 11% to 17%, representing a 54% boost.

- A drop in monthly support ticket volumes, decreasing from 8,200 to 6,700.
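The percentages above are relative changes, not percentage-point differences. A short check of the arithmetic, using only the figures quoted in the list:

```python
def relative_change(before: float, after: float) -> float:
    """Percentage change from before to after, relative to before."""
    return (after - before) / before * 100.0


print(round(relative_change(4.1, 2.7), 1))    # quarterly churn rate
print(round(relative_change(11, 17), 1))      # upsell conversion rate
print(round(relative_change(8200, 6700), 1))  # monthly support tickets
```

The churn rate falls by about 34%, upsell conversions rise by roughly 54% to 55%, and the ticket volume drops by about 18%.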

AI doesn't just help retain valuable customers; it can also identify high-cost, low-value, or problematic customers. Letting go of these customers can free up resources for more profitable clients and even improve team morale. As Sam, a retention lead at Agile Growth Labs, puts it:

"Sometimes, fixing your product is better than fixing your model."

While these strategies can significantly enhance profits, they also highlight the importance of balancing data optimization with robust privacy protections.

2. Customer Privacy and Trust Preservation

Data Usage

AI churn models depend heavily on behavioral data, but handling this data comes with serious privacy challenges. For example, training models on sensitive CRM data - like personal, financial, or browsing information - can expose companies to risks such as membership inference attacks, in which bad actors probe a trained model to determine whether a specific customer's records were part of its training data. Instead of abandoning AI, many businesses are rethinking their approach to data. Techniques like Differential Privacy and Generative Adversarial Networks (GANs) are gaining traction. Differential Privacy adds calibrated noise during training or data release so that no single customer's record can be singled out, while GANs generate synthetic training data that replicates customer behavior without exposing actual identities. These approaches not only mitigate privacy risks but also reinforce customer trust. Notably, privacy-focused techniques of this kind have been reported to achieve up to 87.1% F-Measure in churn prediction.
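One common building block of Differential Privacy is the Laplace mechanism, which adds calibrated random noise to an aggregate before it is released. The sketch below applies it to a simple count of churned customers; the data and the epsilon value are assumptions for illustration, and production systems would typically rely on a vetted privacy library rather than hand-rolled sampling.

```python
import random


def laplace_noise(scale: float, rng: random.Random) -> float:
    """Sample Laplace(0, scale) as the difference of two exponential draws."""
    return rng.expovariate(1.0 / scale) - rng.expovariate(1.0 / scale)


def private_count(flags, epsilon: float, rng: random.Random) -> float:
    """Noisy count of True flags; a count has sensitivity 1, so scale = 1 / epsilon."""
    return sum(flags) + laplace_noise(1.0 / epsilon, rng)


rng = random.Random(0)  # seeded only to make the demo repeatable
churned = [True, False, True, True, False, False, True, False]
print(private_count(churned, epsilon=0.5, rng=rng))
```

A smaller epsilon means more noise and stronger privacy, at the cost of a less precise count.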

Impact on Customer Trust

When customers feel manipulated, trust takes a hit. A clear example of this occurred in June 2023, when the FTC filed a complaint against Amazon for its "Iliad Flow." This design tactic made it unnecessarily difficult for Prime members to cancel their subscriptions. By steering users toward options like "Remind me later" instead of "Continue to Cancel", Amazon prioritized short-term retention over customer goodwill. While such tactics might temporarily reduce churn, they can cause long-term damage to a brand's reputation. Companies using AI to identify at-risk customers must avoid guilt-driven tactics like confirmshaming. Instead, they should focus on building trust through ethical and transparent practices.

Regulatory Compliance

Adhering to privacy regulations is no longer optional - it’s a business necessity. Under GDPR, companies must follow principles like data minimization, purpose limitation, and storage limitation. In the U.S., laws like the CCPA empower customers to know what data is being collected, opt out of its sale, and request its deletion. Globally, 71% of countries now have data privacy laws, yet only 34% of organizations have conducted thorough data mapping to fully understand their practices. A recent example of regulatory enforcement occurred in March 2023, when Italy’s Data Protection Authority temporarily banned ChatGPT over privacy concerns. The service was restored in late April 2023, after OpenAI implemented stronger transparency measures and a 30-day data retention policy for API users. Following such standards not only helps avoid fines but also strengthens a company’s reputation and revenue potential.

Business Outcomes

By embracing privacy-preserving technologies and transparent data practices, companies can achieve both regulatory compliance and customer loyalty. Protecting privacy doesn’t mean sacrificing profits - it’s about safeguarding them differently. Transparent, consent-driven data practices help avoid costly fines and PR disasters often referred to as "privacy ticking time bombs". Using Explainable AI (XAI) to clarify churn risk reasons can foster open communication with customers, building what researchers call trust equity. This long-term loyalty extends beyond individual transactions.

New approaches like Causal AI and Uplift Modeling help businesses identify "persuadables" - customers who might stay with the right intervention - while allowing an easy exit for those unlikely to return. This ensures trust is maintained, even when customers choose to leave. Ultimately, companies that use AI insights to improve customer experiences, rather than trap users with manipulative tactics, are setting themselves up for sustained success in the long run.
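The core idea behind uplift modeling is to measure how much an intervention changes retention, rather than how likely a customer is to leave. The sketch below is a minimal, segment-level version that compares a treated group with a control group; the segment names and outcomes are made up, and real uplift models estimate this effect per customer from randomized experiments.

```python
def retention_rate(outcomes):
    """Fraction of customers who stayed (1 = retained, 0 = churned)."""
    return sum(outcomes) / len(outcomes)


def uplift(treated, control):
    """Retention with the intervention minus retention without it."""
    return retention_rate(treated) - retention_rate(control)


# Made-up outcomes per segment: (treated group, control group).
segments = {
    "low_usage_recent_signup": ([1, 1, 1, 0, 1], [0, 1, 0, 0, 1]),
    "heavy_users": ([1, 1, 1, 1, 0], [1, 1, 1, 1, 0]),
}

for name, (treated, control) in segments.items():
    print(name, round(uplift(treated, control), 2))
```

A segment with clearly positive uplift contains the "persuadables"; a segment with uplift near zero gains nothing from the intervention, so those customers can be left alone or offered an easy exit.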

Pros and Cons

Profit-Driven vs Privacy-First AI Churn Prediction: Benefits and Risks Comparison

When comparing profit-driven AI churn prediction with a privacy-first approach, the trade-offs become clear. The decision isn't simple - it involves balancing benefits and risks that can significantly impact business outcomes.

On the profit-driven side, AI churn prediction excels at identifying at-risk customers early, protecting revenue and minimizing losses. By analyzing behavioral patterns - like mouse movements, customer support interactions, and delayed payments - businesses can step in before customers decide to leave. These targeted retention strategies are far more effective than generic campaigns, and AI can even pinpoint high-cost, low-value customers that might not be worth retaining.

But this approach isn't without challenges. Collecting vast amounts of customer data can cross the line from helpful to intrusive, potentially alienating the very audience you aim to retain. As Lena, an AI strategist at Comarch, warns:

"Everyone thinks AI will save them, but most are just playing catch-up".

Additionally, cutting corners on data consent can lead to serious consequences, including regulatory penalties and reputational damage. Poor-quality data feeding into AI models can also result in misguided actions - like targeting loyal customers with unnecessary or poorly timed interventions. Jamie, a product lead at Neural Technologies, shared their experience:

"We trusted the model, and it burned us".

On the other hand, a privacy-first strategy takes a different route. This approach prioritizes compliance with privacy regulations and focuses on gaining customer trust through transparent, consent-driven data practices. By using explainable AI models, companies can foster long-term loyalty while avoiding legal risks. Though it may lead to slower initial growth, this strategy emphasizes building trust equity - loyalty that goes beyond mere transactions. Researchers suggest that combining AI insights with a human touch can create genuine customer experiences that resonate over time. The goal isn't to rely on AI as a cure-all but to use it as a tool to enhance meaningful interactions.

Conclusion

Profit-driven AI and privacy-conscious practices can work together. Companies can achieve this harmony by creating systems that respect data privacy while delivering strong business outcomes through transparency, compliance, and shared accountability.

For AI-powered platforms like Lucid Financials, which handle sensitive financial data, this balance is especially important. Using methods like de-identified or aggregate analytics instead of raw data helps protect privacy while still enabling innovation.

Regulatory frameworks such as the CPRA establish key standards that safeguard both businesses and customers. Proactively communicating with users - for example, notifying them of privacy policy updates before they take effect - builds trust and allows users to make informed decisions. This approach aligns compliance with operational efficiency, strengthening trust throughout the customer experience.

When businesses upload employee or customer data into AI systems, they carry the ethical responsibility of obtaining proper consent. As noted in privacy frameworks:

"YOU ARE SOLELY LIABLE FOR PROTECTING THIRD PARTIES' AND YOUR OWN PRIVACY, AND FOR OBTAINING THE PRIOR CONSENT OF INDIVIDUALS WHOSE PERSONAL INFORMATION IS INCLUDED IN THE BUSINESS FINANCIAL INFORMATION."

By adopting transparent practices and implementing strong privacy safeguards, companies can foster growth while building trust. As discussed earlier, balancing AI-driven profit goals with careful privacy measures not only satisfies regulatory requirements but also strengthens customer loyalty over time.

Ethical AI doesn't mean choosing principles over growth - it means creating practices that support both business goals and customer expectations. Companies that strike this balance not only avoid legal risks but also earn the trust that fuels lasting loyalty and competitive success.

FAQs

What data should we avoid using for churn prediction?

Avoid relying on biased data, outdated metrics like NPS surveys or renewal notices, and proxy variables such as zip codes, which can act as stand-ins for race or other protected characteristics. Using these types of data can result in unfair predictions and damage customer trust.

How can we explain churn scores to customers?

Churn scores are a way to estimate how likely a customer is to stop using a service. They’re calculated by analyzing recent behaviors such as how often someone logs in, how they interact with key features, or how frequently they reach out for support. These scores are usually expressed either as a probability between 0 and 1 or on a 0 to 100 scale, with higher numbers pointing to a greater risk of leaving.

But here’s the thing: churn scores aren’t about labeling or judging customers. Instead, they offer valuable insights into what customers might need. By understanding these signals, businesses can step in early with tailored support, personalized solutions, or even new features to make sure customers continue to find value in the service. It’s all about building trust and creating a better experience.

What’s the safest way to use churn AI and still comply with GDPR/CPRA?

To use churn AI safely while staying compliant with GDPR and CPRA, there are several key steps to keep in mind:

- Practice data minimization: Only collect and process the data that is absolutely necessary for your churn AI objectives. Avoid gathering unnecessary or excessive information.

- Obtain explicit user consent: Make sure users clearly agree to how their data will be used. This consent should be informed, specific, and freely given.

- Ensure transparency: Clearly communicate how the AI processes data, what it does, and why. Users should understand how their information is being handled.

- Use privacy-preserving methods: Techniques like anonymization or pseudonymization can help protect user identities while still allowing for effective data analysis.

- Follow secure data handling practices: Implement robust security measures to safeguard data from breaches or unauthorized access.

- Adhere to legal transfer mechanisms: If data needs to be transferred across borders, ensure compliance with laws governing international data transfers.

- Document automated decision-making: Keep detailed records of how the AI makes decisions, especially when those decisions impact users. This transparency helps demonstrate compliance and builds trust with customers.

These practices not only help maintain compliance but also foster trust and confidence among users.
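Two of the steps above, data minimization and pseudonymization, can be sketched in a few lines. The field allow-list, record layout, and key below are assumptions for illustration; in practice the key would live in a secrets manager.

```python
import hashlib
import hmac

# Fields the churn model is assumed to need; everything else is dropped.
ALLOWED_FIELDS = {"logins_30d", "support_tickets_30d", "late_payments_90d"}


def pseudonymize(customer_id: str, secret_key: bytes) -> str:
    """Replace an identifier with a keyed HMAC-SHA256 digest."""
    return hmac.new(secret_key, customer_id.encode("utf-8"), hashlib.sha256).hexdigest()


def minimize(record: dict, secret_key: bytes) -> dict:
    """Keep only allowed fields and swap the raw ID for a pseudonym."""
    slim = {k: v for k, v in record.items() if k in ALLOWED_FIELDS}
    slim["pseudo_id"] = pseudonymize(record["customer_id"], secret_key)
    return slim


key = b"demo-key-store-real-keys-in-a-secrets-manager"
raw = {"customer_id": "c-1001", "email": "a@example.com", "logins_30d": 4,
       "support_tickets_30d": 2, "late_payments_90d": 0}
print(minimize(raw, key))
```

Pseudonymized data is still personal data under GDPR because the key holder can re-link it, so this step reduces exposure but does not remove the need for consent and access controls.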